Developers can use the reference project to develop and deploy their own RAG-based applications for RTX, accelerated by TensorRT-LLM. The app is built from the TensorRT-LLM RAG developer reference project, available on GitHub.

Develop LLM-Based Applications With RTXĬhat with RTX shows the potential of accelerating LLMs with RTX GPUs. For the time being, users should use the default installation directory (“C:\Users\\AppData\Local\NVIDIA\ChatWithRTX”). In addition to a GeForce RTX 30 Series GPU or higher with a minimum 8GB of VRAM, Chat with RTX requires Windows 10 or 11, and the latest NVIDIA GPU drivers.Įditor’s note: We have identified an issue in Chat with RTX that causes installation to fail when the user selects a different installation directory. Rather than relying on cloud-based LLM services, Chat with RTX lets users process sensitive data on a local PC without the need to share it with a third party or have an internet connection. Since Chat with RTX runs locally on Windows RTX PCs and workstations, the provided results are fast - and the user’s data stays on the device. Chat with RTX can integrate knowledge from YouTube videos into queries. For example, ask for travel recommendations based on content from favorite influencer videos, or get quick tutorials and how-tos based on top educational resources. Adding a video URL to Chat with RTX allows users to integrate this knowledge into their chatbot for contextual queries. Users can also include information from YouTube videos and playlists. Point the application at the folder containing these files, and the tool will load them into its library in just seconds. The tool supports various file formats, including.

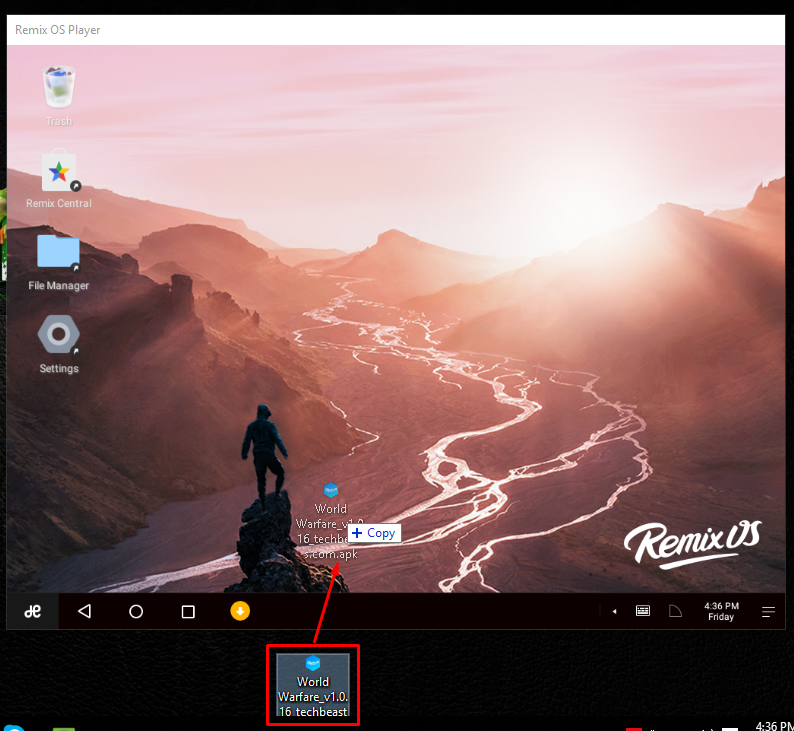

For example, one could ask, “What was the restaurant my partner recommended while in Las Vegas?” and Chat with RTX will scan local files the user points it to and provide the answer with context. Rather than searching through notes or saved content, users can simply type queries. Users can quickly, easily connect local files on a PC as a dataset to an open-source large language model like Mistral or Llama 2, enabling queries for quick, contextually relevant answers. Ask Me AnythingĬhat with RTX uses retrieval-augmented generation (RAG), NVIDIA TensorRT-LLM software and NVIDIA RTX acceleration to bring generative AI capabilities to local, GeForce-powered Windows PCs. Now, these groundbreaking tools are coming to Windows PCs powered by NVIDIA RTX for local, fast, custom generative AI.Ĭhat with RTX, now free to download, is a tech demo that lets users personalize a chatbot with their own content, accelerated by a local NVIDIA GeForce RTX 30 Series GPU or higher with at least 8GB of video random access memory, or VRAM. It is better to use the resident mode.Chatbots are used by millions of people around the world every day, powered by NVIDIA GPU-based cloud servers. Now the installation of Remix OS will start and you will have the option to boot as a guest mode where your sessions are not saved or create a resident mode where all data will be saved. Once you have enabled this, the PC will boot from the USB drive.Ħ. Also, you will need to enable Legacy boot on your PC to boot Remix OS. Each PC has different BIOS settings, so you need to check on how to change the boot order of your PC and then select the USB drive as the first boot device. Once the installation is complete, you need to reboot your PC and then select USB as the booting option from the BIOS.ĥ. This will start the installation process in the USB drive.Ĥ. Select the ISO file in the app and also the USB disk. Once the format is completed, run the Remix OS USB tool which you have downloaded in step 1. 
Now, right click on the pen drive and select format. In the next step, you need to prepare the USB pen drive for installation.
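Chat with RTX applies retrieval-augmented generation to a user's own files: documents in a chosen folder are loaded into a library, the passages most relevant to a query are retrieved, and those passages are handed to the LLM as context. The Python sketch below illustrates that general flow under simplifying assumptions; it is not the Chat with RTX or TensorRT-LLM implementation. It scores passages with bag-of-words cosine similarity rather than the neural embeddings a production RAG system would typically use, reads only plain `.txt` files (an illustrative choice, not the app's list of supported formats), and stops at building the prompt instead of calling a model. The names `load_library`, `retrieve` and `build_prompt` are hypothetical.

```python
import math
import re
from collections import Counter
from pathlib import Path

# Assumption for illustration only: this sketch reads plain-text files,
# which is NOT the Chat with RTX list of supported formats.
SUPPORTED_SUFFIXES = {".txt"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def load_library(folder: Path, chunk_words: int = 100) -> list[str]:
    """Read every supported file under `folder` and split it into word chunks."""
    chunks: list[str] = []
    for path in sorted(folder.rglob("*")):
        if path.is_file() and path.suffix.lower() in SUPPORTED_SUFFIXES:
            words = path.read_text(encoding="utf-8", errors="ignore").split()
            for start in range(0, len(words), chunk_words):
                chunks.append(" ".join(words[start:start + chunk_words]))
    return chunks


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words count vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks whose word counts are most similar to the query's."""
    q = Counter(tokenize(query))
    ranked = sorted(chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Assemble the context-plus-question prompt a local LLM would receive."""
    context = "\n\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    )


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as tmp:
        notes = Path(tmp)
        (notes / "vegas.txt").write_text("My partner recommended the noodle bar near the hotel in Las Vegas.")
        (notes / "groceries.txt").write_text("Buy milk, eggs and coffee on Saturday.")
        library = load_library(notes)
        question = "What was the restaurant my partner recommended while in Las Vegas?"
        print(build_prompt(question, retrieve(question, library, k=1)))
```

Running the script prints a prompt whose context is the Las Vegas note, because that file shares far more words with the question than the grocery list does. A real application would then send the prompt to the local model; swapping the word-count scoring for an embedding model is the usual next step, since it matches passages by meaning rather than by exact words.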
